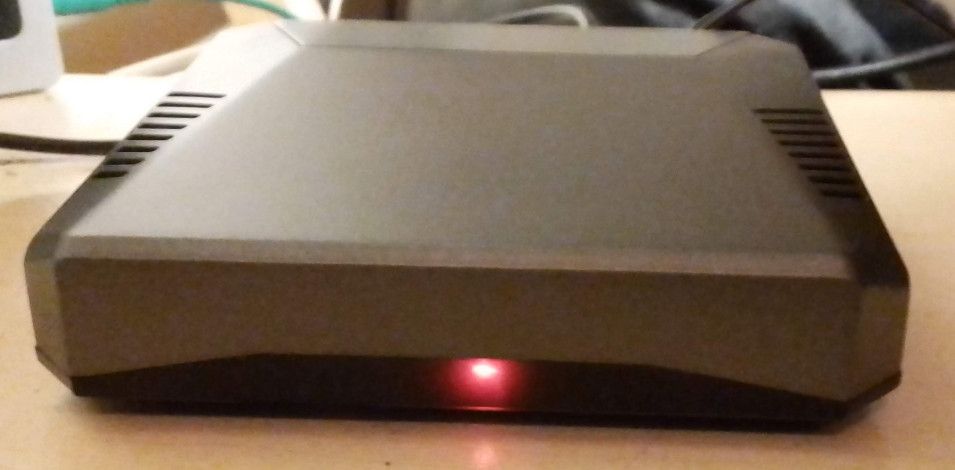

Just bought an even heavier graphics card, the Radeon RX 580. The reason is that I'm trying to get a local (so non-cloud, not internet-connected) version of Microsoft's Copilot. "Chat with your files"? Yeah, I want that, but in a secure setting. I can still switch to the internet version of the model if I need the AI to retrieve information from the internet. I sincerely do not like things that run on an always-on basis, also known as background services.

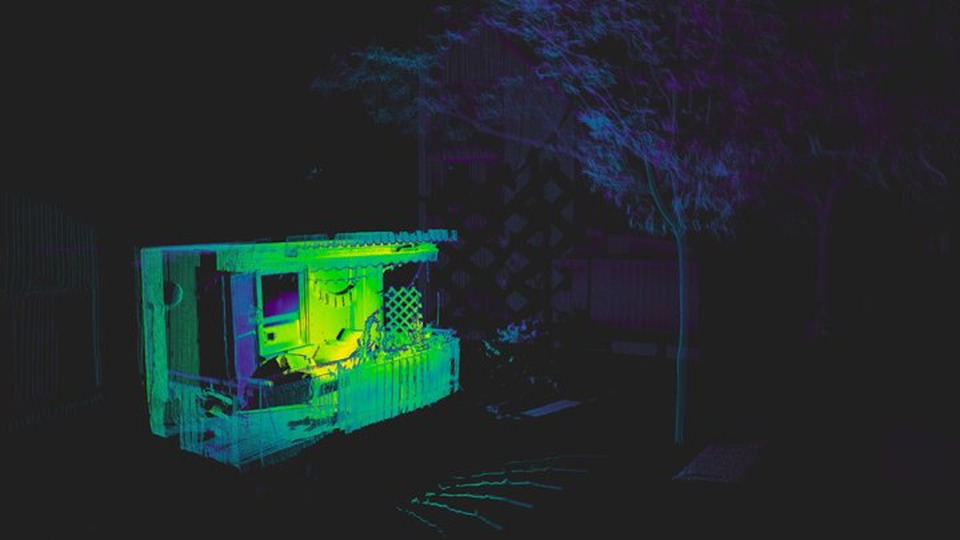

I also needed it for my Blender course: I did a test render and it took way too long. This one packs a much-needed hardware punch:

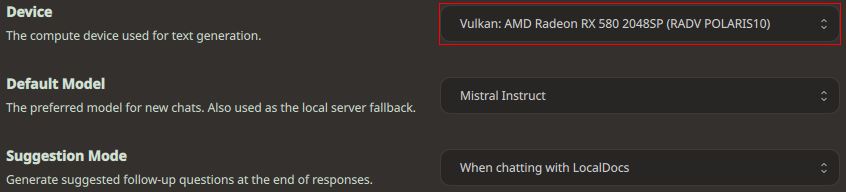

The card uses Vulkan for text-based AI: